The Three Pillars of Modern Distributed Communication: An Architectural Analysis of HTTP, WebSocket, and gRPC

1. Introduction: The Evolution of Connected Systems

Over the past two decades, the demands of massive scale, real‑time interaction, and ubiquitous connectivity have reshaped distributed system architectures.

In the early days of the World Wide Web, the paradigm was simple: the client requested a document and the server returned that document. This request–response model, wrapped in the Hypertext Transfer Protocol (HTTP), was much like pulling a file out of a filing cabinet.

As software evolved from static information repositories into dynamic, living applications—spanning complex microservice ecosystems, high‑frequency trading platforms, and immersive social experiences—the limitations of this single communication model became obvious.

Today, system architects primarily choose among three dominant communication protocols:

- HTTP – ubiquitous, steadily evolving toward HTTP/3

- WebSocket – persistent, bidirectional, full‑duplex

- gRPC – high‑performance, contract‑driven RPC

Protocol choice is no longer a trivial implementation detail. It is a foundational architectural decision that determines:

- The latency distribution of the entire system

- The upper bounds on scalability

- The operational complexity of the platform

- The battery and bandwidth efficiency of mobile clients

When protocols and use cases are mismatched, the result can be catastrophic technical debt:

- “Thundering herd” failures at load balancers

- Excessive battery drain on mobile devices

- Unmanageable cascading latency across a microservice mesh

This article provides a systematic analysis of these three paradigms, going far beyond “which is faster.” It focuses on:

- Transport‑layer and multiplexing mechanics

- Serialization formats (JSON vs Protobuf) and their performance / evolution tradeoffs

- Browser and network‑infrastructure constraints

- Security models and attack surfaces

- Operational and observability implications

It is written for architects, technical leads, and senior engineers who need a practical decision guide for protocol selection in modern networked systems.

2. HTTP: From Stateless Text to Binary Streams

To understand the differences between WebSocket and gRPC, you first have to understand the evolution of HTTP itself. HTTP is both the “assembly language” of the Web and the foundation on which many higher‑level protocols are built.

2.1 HTTP/1.1: A Text‑Based Legacy

For nearly twenty years, HTTP/1.1 was the undisputed carrier of web traffic. Its design philosophy favors human readability and implementation simplicity over machine efficiency.

- Text protocol – HTTP/1.1 messages are line‑oriented ASCII text (each line terminated by CRLF), which makes them easy to debug with Telnet or curl.

- Stateless model – each request is semantically independent and does not rely on previous interactions.

Key limitations of HTTP/1.1:

- Application‑layer Head‑of‑Line (HoL) blocking – On a single TCP connection, responses must come back in the order the requests were sent, and most browsers left pipelining disabled, so in practice the client waits for the full response to one request before sending the next. This creates a “convoy effect”: one long‑running response blocks all subsequent requests behind it.

- Multi‑connection “brute‑force” parallelism – To work around HoL blocking, browsers open around six parallel TCP connections per origin, and sites used domain sharding to multiply that number further. This:

  - Multiplies the number of TCP three‑way handshakes

  - Multiplies TLS handshakes and session management

  - Multiplies congestion‑control and slow‑start state

- Verbose and repetitive headers – Every request must carry cookies, User‑Agent, Accept headers, and so on. In a deep microservice call chain, trace IDs and auth metadata are propagated hop by hop, and the metadata is often larger than the business payload itself.
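To put rough numbers on the header overhead, here is a minimal sketch that serializes a hypothetical HTTP/1.1 request to raw bytes and compares the size of the metadata with the size of the body. The host, path, header values, and payload are invented for illustration; the long Authorization and Cookie values merely stand in for a JWT and session cookies.

```python
import json


def build_request(method: str, path: str, headers: dict, body: bytes) -> bytes:
    """Serialize an HTTP/1.1 request: request line, CRLF-terminated header lines,
    an empty line, then the body."""
    lines = [f"{method} {path} HTTP/1.1"]
    lines += [f"{name}: {value}" for name, value in headers.items()]
    head = "\r\n".join(lines) + "\r\n\r\n"
    return head.encode("ascii") + body


# Hypothetical payload and headers, for illustration only.
body = json.dumps({"user_id": "u-42", "amount": 9.99}).encode("utf-8")
headers = {
    "Host": "api.example.com",
    "User-Agent": "Mozilla/5.0 (X11; Linux x86_64) ExampleClient/1.0",
    "Accept": "application/json",
    "Content-Type": "application/json",
    "Content-Length": str(len(body)),
    "Authorization": "Bearer " + "x" * 200,  # stand-in for a JWT
    "Cookie": "session=" + "y" * 120,        # stand-in for session cookies
    "X-Trace-Id": "4bf92f3577b34da6a3ce929d0e0e4736",
}

request = build_request("POST", "/v1/payments", headers, body)
header_bytes = len(request) - len(body)
print(f"headers: {header_bytes} bytes, body: {len(body)} bytes")
```

With these made‑up values the headers are more than ten times the size of the body, and HTTP/1.1 sends all of them again on every request.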

2.2 HTTP/2: The Binary Revolution

Standardized in 2015, HTTP/2 retains HTTP semantics (methods, status codes, URIs) but completely replaces the on‑the‑wire encoding to fix the inefficiencies of HTTP/1.1.

- Binary framing layer – Communication is no longer plain text; it is split into binary frames, each with a specific purpose:

  - HEADERS – carry request/response headers

  - DATA – carry the message body

  - RST_STREAM, SETTINGS, WINDOW_UPDATE, etc. – cancel streams, negotiate connection settings, and manage flow control

- Multiplexing and streams – A single TCP connection can carry many independent logical streams, each representing one request/response exchange. Frames from different streams are interleaved on the same connection, which:

  - Eliminates application‑layer HoL blocking

  - Avoids the cost of many parallel TCP connections

  - Allows a single connection to saturate available bandwidth

- HPACK header compression – HTTP/2 uses static and dynamic header tables, so repeated headers can be sent as small indices into these tables instead of full strings. This dramatically reduces the cost of repeated headers (Authorization, Trace‑Id, User‑Agent, etc.), especially in microservice environments with deep call chains.
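The framing idea can be sketched in a few lines of Python. This is an illustrative toy, not an HTTP/2 implementation: it packs and parses the fixed 9‑byte frame header (24‑bit length, 8‑bit type, 8‑bit flags, 31‑bit stream identifier, as specified in RFC 9113) and shows DATA frames from two streams interleaved on one connection. HPACK, flow control, SETTINGS, and the connection preface are all omitted.

```python
import struct

# Frame type codes and the END_STREAM flag from the HTTP/2 specification.
DATA, HEADERS, RST_STREAM = 0x0, 0x1, 0x3
END_STREAM = 0x1


def frame_header(length: int, frame_type: int, flags: int, stream_id: int) -> bytes:
    """Pack the fixed 9-byte HTTP/2 frame header."""
    if length >= 1 << 24 or stream_id >= 1 << 31:
        raise ValueError("length or stream id out of range")
    # 24-bit length split into a high byte and a 16-bit remainder, then type,
    # flags, and the 31-bit stream id (the reserved top bit is left as 0).
    return struct.pack(">BHBBI", length >> 16, length & 0xFFFF, frame_type, flags, stream_id)


def parse_header(header: bytes) -> tuple:
    hi, lo, frame_type, flags, stream_id = struct.unpack(">BHBBI", header)
    return (hi << 16) | lo, frame_type, flags, stream_id & 0x7FFFFFFF


# Two requests share one connection: DATA frames of streams 1 and 3 interleave.
wire = b"".join([
    frame_header(5, DATA, 0, 1) + b"hello",
    frame_header(3, DATA, 0, 3) + b"abc",
    frame_header(5, DATA, END_STREAM, 1) + b"world",
    frame_header(3, DATA, END_STREAM, 3) + b"def",
])

# The receiver reassembles each stream purely from the stream id in each header.
streams: dict = {}
offset = 0
while offset < len(wire):
    length, frame_type, flags, stream_id = parse_header(wire[offset:offset + 9])
    payload = wire[offset + 9:offset + 9 + length]
    streams[stream_id] = streams.get(stream_id, b"") + payload
    offset += 9 + length

print(streams)
```

The receiver never needs one stream's frames to arrive back to back; the stream identifier in each header is enough to reassemble both bodies, which is what removes application‑layer HoL blocking.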

2.3 HTTP/3 and QUIC: Fixing Transport‑Layer HoL

Although HTTP/2 fixes application‑layer HoL blocking, all streams still share a single TCP connection. If any packet is lost, the OS TCP stack must pause delivery of subsequent packets until the missing packet is retransmitted—even if those packets belong to unrelated streams. This is transport‑layer HoL blocking.

HTTP/3 solves this by building on QUIC, which runs over UDP:

- User‑space reliability and congestion control – QUIC implementations typically run in user space and provide the reliability and congestion control that used to live in the kernel’s TCP stack, allowing faster iteration and finer control.

- Stream‑level independence – Packet loss only stalls the stream whose data was lost; other streams continue unhindered. This is ideal for unstable cellular networks.

- Connection migration – QUIC identifies connections by connection IDs rather than by the IP/port 4‑tuple, so a connection can survive a device switching between Wi‑Fi and 5G.
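A toy model makes the difference between transport‑layer and stream‑level ordering concrete. The packet list, stream labels, and loss pattern below are hypothetical, and real QUIC retransmits lost frames in new packets rather than resending whole packets; the sketch only models which data can be handed to the application before the loss is repaired.

```python
def deliverable_tcp(packets, lost):
    """TCP-like delivery: one ordered byte stream for the whole connection,
    so nothing after the first lost packet is delivered until it is repaired."""
    delivered = []
    for seq, stream_id in packets:
        if seq in lost:
            break
        delivered.append((seq, stream_id))
    return delivered


def deliverable_quic(packets, lost):
    """QUIC-like delivery: ordering is enforced per stream, so a loss only
    stalls later data belonging to the same stream."""
    blocked_streams = set()
    delivered = []
    for seq, stream_id in packets:
        if seq in lost:
            blocked_streams.add(stream_id)
        elif stream_id not in blocked_streams:
            delivered.append((seq, stream_id))
    return delivered


# (sequence number, stream id): streams A and B interleaved on one connection.
packets = [(1, "A"), (2, "B"), (3, "A"), (4, "B"), (5, "A"), (6, "B")]
lost = {3}  # one packet of stream A is dropped

print("TCP :", deliverable_tcp(packets, lost))
print("QUIC:", deliverable_quic(packets, lost))
```

Under TCP‑style ordering, the single lost packet on stream A also holds back every later packet of stream B; with per‑stream ordering, stream B keeps flowing.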

gRPC over HTTP/3 (gRPC over QUIC) is expected to improve the experience on mobile networks and is an active area of development.

3. WebSocket: A Persistent Full‑Duplex Channel

HTTP/2 makes resource fetching more efficient, but it doesn’t change the fundamental communication model of the Web: clients initiate, servers respond. Scenarios that truly need real‑time, event‑driven communication—chat, collaborative editing, game state synchronization—must break out of this pattern.

WebSocket (RFC 6455) was created for exactly this purpose.

3.1 The Upgrade Mechanism

A WebSocket connection starts life as a normal HTTP/1.1 request and “upgrades” the protocol. This bootstrapping mechanism allows it to work with existing intermediaries like load balancers and proxies.

```http
GET /chat HTTP/1.1
Host: server.example.com
Upgrade: websocket
Connection: Upgrade
Sec-WebSocket-Key: dGhlIHNhbXBsZSBub25jZQ==
Sec-WebSocket-Version: 13
```

Key points:

- The client sends a random 16‑byte nonce, base64‑encoded, as Sec-WebSocket-Key.

- The server appends the magic GUID 258EAFA5-E914-47DA-95CA-C5AB0DC85B11, computes a SHA‑1 hash, base64‑encodes it, and returns the result in Sec-WebSocket-Accept.

- On success, the server responds with 101 Switching Protocols.
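The accept‑value computation described in these key points fits in a few lines using Python's standard hashlib and base64 modules. The input is the sample key from RFC 6455, which is also the key in the request above, and the expected output is the value given in the RFC. This is a sketch of one step only: a real server must also check the Upgrade, Connection, and Sec-WebSocket-Version headers.

```python
import base64
import hashlib

# Fixed GUID defined by RFC 6455.
WEBSOCKET_GUID = "258EAFA5-E914-47DA-95CA-C5AB0DC85B11"


def accept_value(sec_websocket_key: str) -> str:
    """Compute Sec-WebSocket-Accept from the client's Sec-WebSocket-Key."""
    digest = hashlib.sha1((sec_websocket_key + WEBSOCKET_GUID).encode("ascii")).digest()
    return base64.b64encode(digest).decode("ascii")


def handshake_response(sec_websocket_key: str) -> str:
    """Minimal 101 response completing the upgrade."""
    return ("HTTP/1.1 101 Switching Protocols\r\n"
            "Upgrade: websocket\r\n"
            "Connection: Upgrade\r\n"
            f"Sec-WebSocket-Accept: {accept_value(sec_websocket_key)}\r\n\r\n")


print(accept_value("dGhlIHNhbXBsZSBub25jZQ=="))  # s3pPLMBiTxaQ9kYGzzhZRbK+xOo=
```

The client performs the same computation and rejects the connection if the returned value does not match, which proves the server actually understood the WebSocket handshake.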

After the handshake:

- HTTP semantics are discarded

- The same TCP connection is upgraded to the WebSocket framing protocol

- Both sides obtain a raw, full‑duplex binary/text channel

3.2 Framing and Efficiency

WebSocket frames are intentionally lightweight:

- A small header (2–14 bytes)

- Followed by the payload (text or binary)

Important fields:

- FIN bit – indicates whether this is the final fragment of a message

- Opcode – indicates frame type (text, binary, ping, pong, close)

- Masking – client‑to‑server messages must be XOR‑masked with a 32‑bit key to mitigate cache poisoning attacks
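The sketch below encodes a single frame following the layout in RFC 6455, section 5.2, to show where the 2‑to‑14‑byte header range comes from: 2 fixed bytes, an optional 2‑ or 8‑byte extended length, and a 4‑byte masking key on client frames. It is deliberately simplified: it does not enforce the rules for control frames (payload of at most 125 bytes, no fragmentation) or handle extensions such as compression.

```python
import os
import struct
from typing import Optional

OPCODE_TEXT, OPCODE_BINARY = 0x1, 0x2
OPCODE_CLOSE, OPCODE_PING, OPCODE_PONG = 0x8, 0x9, 0xA


def encode_frame(payload: bytes, opcode: int = OPCODE_TEXT, fin: bool = True,
                 mask_key: Optional[bytes] = None) -> bytes:
    """Encode one WebSocket frame. Pass a fresh random 4-byte mask_key for
    client-to-server frames; server-to-client frames are sent unmasked."""
    first_byte = (0x80 if fin else 0x00) | opcode  # FIN bit + opcode (RSV bits 0)
    mask_bit = 0x80 if mask_key is not None else 0x00
    n = len(payload)
    if n <= 125:                  # length fits in the 7-bit field
        header = struct.pack(">BB", first_byte, mask_bit | n)
    elif n <= 0xFFFF:             # 126 => 16-bit extended length follows
        header = struct.pack(">BBH", first_byte, mask_bit | 126, n)
    else:                         # 127 => 64-bit extended length follows
        header = struct.pack(">BBQ", first_byte, mask_bit | 127, n)
    if mask_key is None:
        return header + payload
    # Masking: byte i of the payload is XORed with byte i % 4 of the key.
    masked = bytes(b ^ mask_key[i % 4] for i, b in enumerate(payload))
    return header + mask_key + masked


small = encode_frame(b"hi")                           # server frame
client = encode_frame(b"hi", mask_key=os.urandom(4))  # client frame
large = encode_frame(b"x" * 70_000, opcode=OPCODE_BINARY, mask_key=b"\x01\x02\x03\x04")
print(len(small) - 2, len(client) - 2, len(large) - 70_000)  # header sizes: 2 6 14
```

Because masking is a plain XOR, applying the same key a second time restores the original payload; that is how a server unmasks client frames.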

Compared to HTTP, which parses full headers for every message, WebSocket’s marginal cost for sending tiny messages is extremely low. This makes it the de‑facto standard for:

- Instant messaging and chat

- Multiplayer games

- Collaborative whiteboards and document editing

All of these require low‑latency, high‑frequency interaction.

3.3 Lack of Application Semantics

WebSocket’s biggest strength is also its greatest weakness: it is just an unstructured pipe.

It does not provide standard notions of:

- Routing

- Metadata models

- Application‑level status codes

- Error semantics

As a result, developers must create their own subprotocols:

- Often by wrapping messages in JSON, for example:

```json
{ "type": "chat_message", "roomId": "123", "body": "hello" }
```

- Application‑level error handling is completely custom, unlike HTTP’s standardized 4xx/5xx status codes.

This yields flexibility but also:

- Many teams reinventing incompatible protocols

- No schema enforcement, leading to brittle versioning and compatibility issues
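A typical home‑grown subprotocol ends up looking something like the following minimal sketch, which routes JSON envelopes by their "type" field. The message types, error codes, and reply shapes are hypothetical; the point is that routing, validation, and error reporting all have to be written by hand.

```python
import json
from typing import Callable

# Hypothetical message types for a home-grown subprotocol; RFC 6455 itself
# only delivers opaque text or binary messages.
Handler = Callable[[dict], dict]


def handle_chat_message(msg: dict) -> dict:
    return {"type": "ack", "roomId": msg["roomId"]}


HANDLERS: dict = {"chat_message": handle_chat_message}


def dispatch(raw: str) -> str:
    """Route one incoming text frame by its "type" field and return the reply frame."""
    try:
        msg = json.loads(raw)
    except json.JSONDecodeError:
        return json.dumps({"type": "error", "code": "bad_json"})
    if not isinstance(msg, dict):
        return json.dumps({"type": "error", "code": "bad_envelope"})
    msg_type = msg.get("type")
    handler = HANDLERS.get(msg_type) if isinstance(msg_type, str) else None
    if handler is None:
        return json.dumps({"type": "error", "code": "unknown_type"})
    try:
        return json.dumps(handler(msg))
    except KeyError as missing:
        # No schema: a missing field only surfaces when the handler runs.
        return json.dumps({"type": "error", "code": "missing_field", "field": missing.args[0]})


print(dispatch('{ "type": "chat_message", "roomId": "123", "body": "hello" }'))
print(dispatch('{ "type": "chat_message", "body": "hello" }'))
```

The second call shows the versioning problem in miniature: a client that omits roomId is only caught at runtime, not at build time.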

4. gRPC: Structured Remote Procedure Calls

gRPC can be thought of as an RPC framework for the cloud‑native era. Designed and open‑sourced by Google, it aims for:

- High performance and low latency

- Strong typing and contract‑first development

- Multi‑language interoperability

4.1 IDL and Protocol Buffers

At the core of gRPC is the contract‑first philosophy: before writing any code, you define services and message types using an Interface Definition Language (IDL), most commonly Protocol Buffers (Protobuf).

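```protobuf
syntax = "proto3";

service PaymentService {
  rpc ProcessPayment (PaymentRequest) returns (PaymentResponse);
}

message PaymentRequest {
  string user_id = 1;
  double amount = 2;
  string currency = 3;
}

// PaymentResponse is referenced above but was not defined in the snippet;
// these fields are hypothetical, added only so the file compiles.
message PaymentResponse {
  string transaction_id = 1;
  bool success = 2;
}
```

This .proto file is the single source of truth:

- The protoc compiler, together with language plugins, can generate:

  - Client stubs

  - Server skeletons for Go, Java, Python, C++, Node.js, and more

- In statically typed languages, both sides get compile‑time type checking, eliminating many of the serialization and missing‑field bugs that are common with ad‑hoc JSON/REST APIs.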

4.2 Protobuf Serialization

gRPC’s efficiency largely comes from Protobuf’s binary serialization:

- No repeated field names – JSON repeats field names like "user_id" in every message. Protobuf instead sends a compact key that combines the field number (e.g., 1) with a wire type, and the receiver uses the precompiled schema to interpret it.

- Varint encoding – Integers use variable‑length encoding: small values occupy fewer bytes.

- ZigZag encoding – For sint32/sint64 fields, signed integers are mapped to unsigned numbers in a way that keeps small negative values small, so they also encode efficiently as varints (a plain int32/int64 field encodes any negative value in 10 bytes).
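These encodings can be reproduced with a short, hand‑rolled encoder based on the documented Protobuf wire format. It is a sketch for illustration only (production code uses the classes generated by protoc): it encodes the PaymentRequest message from the .proto example with hypothetical field values and compares the result with the equivalent JSON. The sint64 helper is included only to show ZigZag; PaymentRequest itself has no signed integer fields.

```python
import json
import struct


def encode_varint(value: int) -> bytes:
    """Base-128 varint: 7 payload bits per byte, least significant group first;
    the high bit is set on every byte except the last."""
    if value < 0:
        raise ValueError("varint expects a non-negative integer")
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)
        else:
            out.append(byte)
            return bytes(out)


def zigzag64(n: int) -> int:
    """ZigZag mapping for 64-bit signed values: 0->0, -1->1, 1->2, -2->3, ..."""
    return (n << 1) ^ (n >> 63)


def field_key(field_number: int, wire_type: int) -> bytes:
    # Each field starts with a varint key: (field_number << 3) | wire_type.
    return encode_varint((field_number << 3) | wire_type)


def encode_string(field_number: int, text: str) -> bytes:
    data = text.encode("utf-8")
    return field_key(field_number, 2) + encode_varint(len(data)) + data  # 2 = length-delimited


def encode_double(field_number: int, x: float) -> bytes:
    return field_key(field_number, 1) + struct.pack("<d", x)  # 1 = 64-bit, little-endian


def encode_sint64(field_number: int, n: int) -> bytes:
    return field_key(field_number, 0) + encode_varint(zigzag64(n))  # 0 = varint


# PaymentRequest { user_id = 1; amount = 2; currency = 3 } with hypothetical values.
proto = encode_string(1, "u-42") + encode_double(2, 9.99) + encode_string(3, "USD")
as_json = json.dumps({"user_id": "u-42", "amount": 9.99, "currency": "USD"}).encode("utf-8")
print(len(proto), len(as_json))  # 20 54
```

For this small message the Protobuf encoding is 20 bytes against 54 bytes of JSON, mostly because the three field names are replaced by one‑byte keys.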

The result:

- Messages are often 60–80% smaller than equivalent JSON

- Parsing avoids tokenizing text and converting numbers from decimal strings, so CPU overhead is usually much lower

For high‑QPS microservices, this means the same hardware can handle many more requests.

4.3 HTTP/2‑Based Streaming Model

gRPC is built entirely on HTTP/2. Each RPC call maps to an HTTP/2 stream.

It supports four interaction patterns:

- Unary RPC – One request, one response, similar to a traditional function call.

- Server streaming – The client sends one request, and the server sends back a stream of messages on the same HTTP/2 stream. Ideal for:

  - Subscriptions

  - Paging or chunked downloads

- Client streaming – The client sends a stream of messages, and the server responds once. Useful for:

  - Uploading large files

  - Sending batched telemetry

- Bidirectional streaming – Client and server send streams of messages independently on the same HTTP/2 stream. This provides WebSocket‑like capabilities but:

  - Retains strong typing and schemas

  - Reuses HTTP/2 multiplexing and header compression
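Streaming works because gRPC frames every message inside the HTTP/2 stream with a 5‑byte prefix: a 1‑byte compressed flag followed by a 4‑byte big‑endian length, as described in the gRPC over HTTP/2 protocol document. The sketch below frames and splits messages on one stream; the payloads are placeholder bytes rather than real Protobuf, compression is not handled, and the final status (which real servers send in HTTP/2 trailers as grpc-status) is omitted.

```python
import struct


def frame_message(message: bytes, compressed: bool = False) -> bytes:
    """Length-prefixed message: 1-byte compressed flag + 4-byte big-endian length + bytes."""
    return struct.pack(">BI", 1 if compressed else 0, len(message)) + message


def split_messages(body: bytes) -> list:
    """Split the concatenated DATA payload of one HTTP/2 stream back into messages."""
    messages, offset = [], 0
    while offset < len(body):
        flag, length = struct.unpack_from(">BI", body, offset)
        if flag != 0:
            raise NotImplementedError("compressed messages are not handled in this sketch")
        start = offset + 5
        messages.append(body[start:start + length])
        offset = start + length
    return messages


# A server-streaming response is several framed messages on the same stream.
stream_body = b"".join(frame_message(m) for m in [b"tick-1", b"tick-2", b"tick-3"])
print(split_messages(stream_body))
```

The same framing serves all four patterns: a unary call carries exactly one prefixed message in each direction, while streaming calls carry more of them, and message boundaries need not line up with HTTP/2 DATA frame boundaries.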

5. Performance Characteristics

Theoretical protocol differences ultimately show up in production as observable latency and resource‑usage differences.

5.1 Throughput and Payload Efficiency

In data‑heavy microservice scenarios, two bottlenecks dominate:

- Bandwidth consumption

- CPU time spent on serialization and deserialization

Typical findings:

- Payload size – For the same business object, sizes typically rank JSON (uncompressed) > JSON (gzip) > Protobuf, provided the payload is large enough for gzip’s fixed overhead to pay off

  - Protobuf is often another 30–50% smaller than gzipped JSON

- Serialization speed – Benchmarks in Go and Java commonly show Protobuf encoding/decoding to be 3–7× faster than JSON. At six‑figure RPS levels, this translates into:

  - Lower CPU usage

  - Smaller cluster sizes

  - Direct infrastructure cost savings

5.2 Latency Profiles

Looking at end‑to‑end latency for a single request:

- WebSocket

  - Once a connection is established, per‑message overhead is minimal (2–14 bytes of frame header)

  - No HTTP semantic parsing; ideal for real‑time messaging

- gRPC

  - HTTP/2 multiplexing, header compression, and persistent connections

  - Typically much faster than REST over HTTP/1.1

  - Slightly higher overhead than a raw WebSocket, but with strong typing and rich semantics

- REST (HTTP/1.1 + JSON)

  - If connection reuse (Keep‑Alive) is not carefully managed, repeated TCP three‑way handshakes and TLS handshakes add large fixed costs

  - Text parsing and verbose headers add further latency
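A back‑of‑the‑envelope calculation shows why connection reuse matters so much. The sketch counts only network round trips (one for the TCP handshake, two for a full TLS 1.2 handshake or one for TLS 1.3, and one for the request itself) and assumes a 100 ms round‑trip time; it ignores server processing, TCP Fast Open, TLS session resumption, and 0‑RTT.

```python
# Round trips for a full TLS handshake, without session resumption or 0-RTT.
TLS_HANDSHAKE_RTTS = {"1.2": 2, "1.3": 1}


def time_to_first_byte_ms(rtt_ms: float, new_connection: bool, tls_version: str = "1.3") -> float:
    """Network-only lower bound on the time until the first response byte arrives."""
    round_trips = 1  # the request itself and its response
    if new_connection:
        round_trips += 1  # TCP three-way handshake
        round_trips += TLS_HANDSHAKE_RTTS[tls_version]
    return round_trips * rtt_ms


rtt = 100.0  # assumed mobile round-trip time, in milliseconds
print(time_to_first_byte_ms(rtt, new_connection=True, tls_version="1.2"))  # 400.0
print(time_to_first_byte_ms(rtt, new_connection=True))                     # 300.0
print(time_to_first_byte_ms(rtt, new_connection=False))                    # 100.0
```

On this assumed link, a cold HTTPS request costs three to four times as much network time as the same request on a warm, reused connection.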

5.3 Mobile Battery and Radio States

On mobile devices, the battery cost of network activity is dominated by the wireless radio (cellular/Wi‑Fi), which has power states and “tail times”:

- Switching into the high‑power state (e.g., CELL_DCH on 3G, RRC_CONNECTED on LTE) costs energy

- After data transmission, the radio stays in higher‑power states for some time before dropping back

Protocol behaviors:

- WebSocket

  - Typically requires periodic heartbeats (e.g., every 30 seconds) to avoid NAT timeouts

  - Keeps the radio in more active states, which is worse for battery life

  - But necessary for “always‑online” experiences like chat

- gRPC (unary + multiplexing)

  - Requests are sent in short, intense bursts

  - A single connection is multiplexed across many RPCs, avoiding the “connection storm” of HTTP/1.1

  - The radio can drop back to low‑power states more quickly

- REST/HTTP/1.1

  - Multiple connections + larger payloads + slower processing keep the radio in high‑power states longer
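A deliberately crude model illustrates the tail‑time effect. It assumes each transmission keeps the radio in its high‑power state for a fixed tail (10 seconds here, an assumed value; real tails vary by network technology and carrier) and merges overlapping intervals, so closely spaced sends share one tail. Intermediate radio states and transfer durations are ignored.

```python
from typing import List, Optional


def high_power_seconds(send_times: List[float], tail_s: float) -> float:
    """Total seconds in the high-power radio state, assuming each send keeps the
    radio there for tail_s seconds; overlapping intervals are merged."""
    total = 0.0
    start: Optional[float] = None
    end: Optional[float] = None
    for t in sorted(send_times):
        if end is not None and t <= end:
            end = max(end, t + tail_s)  # send falls inside the current tail
        else:
            if start is not None and end is not None:
                total += end - start    # close the previous high-power period
            start, end = t, t + tail_s
    if start is not None and end is not None:
        total += end - start
    return total


TAIL_S = 10.0  # assumed tail time; real values depend on network and carrier
heartbeats = [float(t) for t in range(0, 3600, 30)]  # WebSocket ping every 30 s for an hour
batched = [0.0, 0.5, 1.0, 1800.0, 1800.5]            # two bursts of multiplexed RPCs

print(high_power_seconds(heartbeats, TAIL_S))  # 1200.0
print(high_power_seconds(batched, TAIL_S))     # 21.5
```

Under these assumptions, a 30‑second heartbeat keeps the radio in high power for 1,200 seconds per hour, while two short bursts of multiplexed RPCs cost 21.5 seconds.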

6. Implementation Ecosystem and Browser Constraints

Protocol specifications are only half the story; implementation and platform constraints matter just as much.

6.1 Why gRPC‑Web Exists

Browsers provide:

- High‑level HTTP APIs: fetch / XHR

- A WebSocket API: new WebSocket(url)

- But no low‑level access to HTTP/2 or HTTP/3 frames

Standard gRPC implementations rely on:

- HTTP/2 streams

- Trailers for status codes and rich metadata

This cannot be done directly from a browser, which led to gRPC‑Web:

- A code‑generated browser client (via protoc-gen-grpc-web)

- Encodes gRPC requests into HTTP/1.1 or HTTP/2 requests that browsers can send

- A server‑side proxy (Envoy, Nginx, Go/Node middleware) translates these into native gRPC for backend services

Trade‑offs:

- Adds an extra component that must be scaled and operated

- Historically lacked full parity with native gRPC (e.g., no client‑streaming or bidirectional streaming; request streaming in modern Fetch APIs may eventually close part of this gap)
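Trailers are the crux of the browser problem, and gRPC‑Web’s workaround can be sketched as follows. As we understand the gRPC‑Web protocol description, the response body reuses gRPC’s 5‑byte length‑prefixed framing, and the trailers travel as a final frame whose flag byte has its most significant bit set, encoded like HTTP/1 header lines. The status code and message below are hypothetical, and the base64 "grpc-web-text" variant is not covered.

```python
import struct

TRAILER_FLAG = 0x80  # MSB of the flag byte marks the trailers frame


def parse_grpc_web_body(body: bytes):
    """Split a gRPC-Web response body into message payloads and a trailer dict."""
    messages, trailers, offset = [], {}, 0
    while offset < len(body):
        flag, length = struct.unpack_from(">BI", body, offset)
        payload = body[offset + 5:offset + 5 + length]
        offset += 5 + length
        if flag & TRAILER_FLAG:
            # Trailers are encoded like HTTP/1 headers: "name: value\r\n" lines.
            for line in payload.decode("ascii").split("\r\n"):
                if line:
                    name, _, value = line.partition(":")
                    trailers[name.strip().lower()] = value.strip()
        else:
            messages.append(payload)
    return messages, trailers


def frame(flag: int, payload: bytes) -> bytes:
    return struct.pack(">BI", flag, len(payload)) + payload


# One placeholder message followed by trailers carrying a NOT_FOUND (5) status.
body = frame(0x00, b"<protobuf bytes>") + frame(
    TRAILER_FLAG, b"grpc-status: 5\r\ngrpc-message: user not found\r\n")
print(parse_grpc_web_body(body))
```

Because the status lives in the body rather than in real HTTP trailers, a browser using only fetch or XHR can still read it.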

6.2 The Connect Protocol: Unifying Browser and Backend

To reduce gRPC‑Web friction, Buf introduced the Connect protocol:

- A single service can be exposed as:

  - Native gRPC (for internal services)

  - gRPC‑Web (for legacy compatibility)

  - Connect (a simple, POST‑based protocol)

- Works over HTTP/1.1, HTTP/2, and HTTP/3

- Supports JSON or Protobuf bodies; JSON bodies are easy to inspect in browser dev tools

This gives a unified API surface across browsers, backends, and gateways.
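Because a Connect unary call is an ordinary HTTP POST, it can be built with nothing but the standard library. This sketch reflects our reading of the Connect protocol (a POST to /&lt;package&gt;.&lt;Service&gt;/&lt;Method&gt;, a JSON or Protobuf Content-Type, and a Connect-Protocol-Version: 1 header); the host and service names are hypothetical, and the request is only constructed, not sent.

```python
import json
import urllib.request


def connect_unary_request(base_url: str, service: str, method: str,
                          message: dict) -> urllib.request.Request:
    """Build (but do not send) a Connect-style unary request with a JSON body."""
    return urllib.request.Request(
        url=f"{base_url}/{service}/{method}",
        data=json.dumps(message).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",  # "application/proto" for binary bodies
            "Connect-Protocol-Version": "1",
        },
    )


req = connect_unary_request(
    "https://api.example.com",    # hypothetical host
    "payment.v1.PaymentService",  # hypothetical fully qualified service name
    "ProcessPayment",
    {"userId": "u-42", "amount": 9.99, "currency": "USD"},
)
print(req.get_method(), req.full_url)
```

The JSON body follows Protobuf’s JSON mapping, which uses lowerCamelCase field names such as userId, so the same request is easy to reproduce with curl or inspect in the browser’s network panel.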

6.3 Language Ecosystems

- gRPC

  - First‑class support in Go, Java, C++, Python, and others

  - The core libraries are developed as a CNCF project with heavy Google involvement; quality and performance are high

- WebSocket

  - Available in virtually every language, but quality varies

  - Node.js: ws and socket.io are de‑facto standards; socket.io adds auto‑reconnect and long‑polling fallbacks

  - Go: gorilla/websocket and nhooyr.io/websocket (now maintained as coder/websocket) are commonly used

7. Architectural Patterns and Topologies

Protocol choices directly shape system architecture and deployment topology.

7.1 Backend for Frontend (BFF) Pattern

Typical scenario

- Internal microservices use gRPC for efficiency and strong typing

- Frontends (especially browsers) need real‑time data but:

  - Have limited support for native gRPC

  - Need to aggregate data from multiple backends

Solution: a dedicated BFF service

- Deployed at the edge, it:

  - Talks to frontends via WebSocket, Server‑Sent Events (SSE), or GraphQL subscriptions

  - Talks to backends via gRPC

- Responsibilities:

  - Protocol translation (gRPC ⇄ WebSocket/HTTP)

  - Data aggregation and shaping (to avoid frontend N+1 request patterns)

When a backend service like OrderService pushes updates over a gRPC stream, the BFF decodes Protobuf messages and pushes JSON events over WebSocket to the browser.
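The translation step at the heart of a BFF can be sketched with asyncio. Everything here is a stand‑in: order_stream() takes the place of a gRPC server‑streaming call to OrderService, the OrderUpdate dataclass takes the place of a generated Protobuf message, and fake_ws_send() takes the place of a WebSocket connection’s send method. The event envelope format is likewise hypothetical.

```python
import asyncio
import json
from dataclasses import asdict, dataclass
from typing import AsyncIterator, Awaitable, Callable, List


@dataclass
class OrderUpdate:  # hypothetical stand-in for a generated Protobuf message
    order_id: str
    status: str


async def order_stream() -> AsyncIterator[OrderUpdate]:
    """Placeholder for a gRPC server-streaming call to OrderService."""
    for status in ("PAID", "SHIPPED", "DELIVERED"):
        await asyncio.sleep(0)  # yield to the event loop, as a network read would
        yield OrderUpdate(order_id="o-1", status=status)


async def relay(updates: AsyncIterator[OrderUpdate],
                send: Callable[[str], Awaitable[None]]) -> None:
    """BFF loop: turn each typed backend message into a JSON event for the browser."""
    async for update in updates:
        await send(json.dumps({"type": "order_update", "data": asdict(update)}))


async def main() -> List[str]:
    sent: List[str] = []

    async def fake_ws_send(text: str) -> None:  # stands in for a WebSocket send()
        sent.append(text)

    await relay(order_stream(), fake_ws_send)
    return sent


for event in asyncio.run(main()):
    print(event)
```

A production BFF would also need to deal with backpressure and client disconnects, which this sketch ignores.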

7.2 Gateway Aggregation Pattern

In a microservice architecture, a “load dashboard” operation might depend on:

- A user service

- A billing service

- A notifications service

Strategies:

- Direct REST fan‑out from the client

  - The client issues three parallel HTTP requests

  - On high‑latency mobile networks, this is chatty and inefficient

  - The client must orchestrate retries and error handling

- gRPC + API gateway

  - The client makes a single gRPC call (e.g., GetDashboard)

  - The gateway fans out to multiple internal gRPC services inside the data center

  - It aggregates results and returns a single combined response

This:

- Moves complexity into a low‑latency, controlled network environment

- Reduces client round‑trips and simplifies frontend logic
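Gateway‑side fan‑out maps naturally onto concurrent calls. In this sketch the three service functions are hypothetical placeholders for gRPC client stubs (one of them fails on purpose), and the policy of degrading gracefully when a non‑critical dependency fails, while still failing if the user record is missing, is an illustrative design choice rather than something the pattern mandates.

```python
import asyncio


# Hypothetical backend calls; in practice these would be gRPC client stubs.
async def get_user(user_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"id": user_id, "name": "Ada"}


async def get_billing(user_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"balance": 42}


async def get_notifications(user_id: str) -> list:
    await asyncio.sleep(0.01)
    raise TimeoutError("notifications service timed out")  # simulated failure


async def get_dashboard(user_id: str, timeout_s: float = 0.5) -> dict:
    """Fan out to three backends concurrently and merge the results."""
    user, billing, notifications = await asyncio.wait_for(
        asyncio.gather(get_user(user_id), get_billing(user_id),
                       get_notifications(user_id), return_exceptions=True),
        timeout=timeout_s,
    )
    if isinstance(user, BaseException):
        raise user  # without the user record there is no dashboard to return
    return {
        "user": user,
        # Non-critical sections degrade to empty values instead of failing the call.
        "billing": None if isinstance(billing, BaseException) else billing,
        "notifications": [] if isinstance(notifications, BaseException) else notifications,
    }


print(asyncio.run(get_dashboard("u-42")))
```

The client still makes a single call and receives a single response, even though one backend failed inside the data center.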

7.3 Hybrid Protocol Gateways

Modern API gateways (Kong, Gloo, Envoy, etc.) often:

- Accept external REST/JSON requests

- Translate them into internal gRPC calls

- Provide optional WebSocket/HTTP/2 channels for streaming scenarios

This enables a “gRPC‑first internally, REST‑compatible externally” strategy without duplicating business logic.

8. Operational Complexity: Infrastructure and Observability

Protocols affect not only developer experience but also the day‑to‑day life of SREs and operators.

8.1 Load Balancing: L4 vs L7

- HTTP/1.1 (short‑lived or limited Keep‑Alive)

  - Stateless, short‑lived connections

  - L4 load balancers (distributing TCP connections) are sufficient and yield relatively even load

- gRPC (long‑lived, multiplexed connections)

  - A client may open a single TCP connection and send tens of thousands of RPCs over it

  - A pure L4 load balancer pins that connection to one backend instance, so one node becomes hot while the others sit idle

  - This requires L7 load balancing: terminate HTTP/2 at the load balancer, inspect streams and frames, and redistribute at the RPC level

- WebSocket

  - Strongly stateful and long‑lived

  - You can’t load‑balance within a WebSocket connection; a client’s connection is effectively pinned to a single server

  - During autoscaling, existing connections are hard to migrate, leading to hot spots
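A small simulation shows why connection‑level balancing breaks down for long‑lived, multiplexed connections. Two hypothetical clients each hold one connection and send 10,000 RPCs; round‑robin is used for both strategies purely for simplicity (real balancers often use least‑request or similar policies).

```python
from collections import Counter
from itertools import cycle

BACKENDS = ["backend-a", "backend-b", "backend-c"]


def l4_balance(connections: dict) -> Counter:
    """Connection-level: each connection is assigned a backend once, and every
    RPC on that connection follows it."""
    load, picker = Counter(), cycle(BACKENDS)
    for rpc_count in connections.values():
        load[next(picker)] += rpc_count
    return load


def l7_balance(connections: dict) -> Counter:
    """Request-level: the balancer terminates HTTP/2 and picks a backend per RPC."""
    load, picker = Counter(), cycle(BACKENDS)
    for rpc_count in connections.values():
        for _ in range(rpc_count):
            load[next(picker)] += 1
    return load


# Two hypothetical clients, each with one long-lived HTTP/2 connection.
traffic = {"client-1": 10_000, "client-2": 10_000}
print("L4:", dict(l4_balance(traffic)))  # backend-c never appears
print("L7:", dict(l7_balance(traffic)))
```

At L4 one backend receives nothing at all; at L7 the same traffic is spread almost perfectly evenly.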

8.2 Debugging and Troubleshooting

- HTTP/JSON

  - Browser dev tools, Postman, and curl make it trivial to inspect and replay requests

  - Packet capture tools can read the plaintext directly (once TLS is terminated or decrypted)

- WebSocket

  - Browser dev tools have native support for inspecting frames

  - Charles, Fiddler, and similar proxies can intercept and decode WebSocket traffic

- gRPC

  - On‑the‑wire traffic is binary Protobuf; without the .proto files, it is opaque

  - Debugging relies on:

    - CLI tools like grpcurl (using gRPC Reflection or local .proto files)

    - GUI clients such as Postman’s gRPC support or Insomnia

    - Wireshark with Protobuf dissectors and schemas configured

8.3 Error Models and Status Code Mapping

gRPC and HTTP have different error spaces:

- gRPC: enums like NOT_FOUND, ALREADY_EXISTS, DATA_LOSS

- HTTP: integer status codes like 404, 409, 500

When exposing gRPC through a REST gateway, you need a consistent mapping policy, for example:

- INVALID_ARGUMENT → 400 Bad Request

- UNAUTHENTICATED → 401 Unauthorized

- PERMISSION_DENIED → 403 Forbidden

- DATA_LOSS → 500 Internal Server Error

Without a standard mapping, different teams may make inconsistent choices, complicating client logic.
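One way to make the mapping policy explicit is a single shared table. The HTTP codes below follow the mapping documented alongside the canonical status codes in google.rpc.Code; the error‑body shape and the fallback to 500 for unrecognized codes are assumptions of this sketch.

```python
# gRPC status code -> HTTP status, following the mapping documented in
# google.rpc.Code (google/rpc/code.proto).
GRPC_TO_HTTP = {
    "OK": 200,
    "CANCELLED": 499,  # "Client Closed Request" (non-standard)
    "UNKNOWN": 500,
    "INVALID_ARGUMENT": 400,
    "DEADLINE_EXCEEDED": 504,
    "NOT_FOUND": 404,
    "ALREADY_EXISTS": 409,
    "PERMISSION_DENIED": 403,
    "RESOURCE_EXHAUSTED": 429,
    "FAILED_PRECONDITION": 400,
    "ABORTED": 409,
    "OUT_OF_RANGE": 400,
    "UNIMPLEMENTED": 501,
    "INTERNAL": 500,
    "UNAVAILABLE": 503,
    "DATA_LOSS": 500,
    "UNAUTHENTICATED": 401,
}


def to_http_error(grpc_code: str, message: str) -> tuple:
    """Translate a gRPC status into an HTTP status and a hypothetical JSON error body."""
    http_status = GRPC_TO_HTTP.get(grpc_code, 500)  # assumption: unknown codes become 500
    return http_status, {"error": {"code": grpc_code, "message": message}}


print(to_http_error("NOT_FOUND", "order o-1 does not exist"))
```

Keeping the original gRPC code in the body lets clients that care about the finer distinction (for example, ABORTED versus ALREADY_EXISTS, which both become 409) still recover it.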

9. Security Considerations

9.1 Cross‑Site WebSocket Hijacking (CSWSH)

Browsers do not apply the Same‑Origin Policy (SOP) or CORS to WebSocket connections; they send an Origin header with the handshake, and it is up to the server to check it.

Attack scenario:

- The user is logged into bank.com

- The user visits a malicious site, evil.com

- JavaScript on evil.com opens a WebSocket to wss://bank.com/account

- The browser automatically includes bank.com cookies (unless their SameSite settings prevent it)

- If the server only checks cookies and not the Origin header, it may accept the connection and hand control of the socket to the attacker

Mitigations:

- Strictly validate the Origin header during the handshake

- Prefer token‑based authentication (short‑lived tokens in headers or subprotocol parameters) over implicit cookie‑based auth
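The Origin check from the first mitigation can be sketched as an exact allowlist lookup during the handshake. The allowed origins are hypothetical, and the choice to reject requests with no Origin header at all is an assumption suited to browser‑facing endpoints; services that also serve non‑browser clients usually authenticate those by other means.

```python
from typing import Optional

# Hypothetical allowlist for the bank.com example.
ALLOWED_ORIGINS = {"https://bank.com", "https://www.bank.com"}


def origin_allowed(origin: Optional[str]) -> bool:
    # Exact match only: substring or suffix checks would wrongly accept
    # origins such as "https://bank.com.evil.com".
    return origin is not None and origin.lower() in ALLOWED_ORIGINS


def handshake_status(request_headers: dict) -> int:
    """Return the HTTP status a server would answer this upgrade request with."""
    headers = {k.lower(): v for k, v in request_headers.items()}  # names are case-insensitive
    if not origin_allowed(headers.get("origin")):
        return 403  # refuse before any cookie-based session is trusted
    return 101      # a real server would also validate the key, version, and credentials


print(handshake_status({"Origin": "https://evil.com", "Cookie": "session=abc"}))  # 403
```

The check happens before the upgrade completes, so a cross‑site page never obtains an open socket, whatever cookies the browser attached.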

9.2 The “Rapid Reset” Attack (CVE‑2023‑44487)

In 2023, a critical HTTP/2 vulnerability was disclosed that exploited its multiplexing features:

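- Attackers repeatedly open streams and immediately send RST_STREAM frames

- The server allocates resources for each stream but quickly discards them

- Because reset streams no longer count toward the concurrent‑stream limit (SETTINGS_MAX_CONCURRENT_STREAMS), that limit does not cap the attack, and at high rates it can exhaust server CPU on stream bookkeeping without consuming much bandwidth

Impact on gRPC:

- Because each gRPC call is an HTTP/2 stream, gRPC servers running on unpatched HTTP/2 implementations are directly exposed to this pattern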

Mitigations:

- Envoy, Nginx, and gRPC libraries have added limits on the rate of stream resets

- Servers can aggressively block clients exhibiting suspicious reset patterns
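Deployed mitigations differ across servers and proxies, but one simple idea is to count client‑initiated resets per connection in a sliding time window and close the connection (for example with a GOAWAY frame) once a threshold is exceeded. The class below is a hypothetical sketch of that idea; the 100‑resets‑per‑second threshold is illustrative, not a recommended value.

```python
import time
from collections import deque
from typing import Optional


class ResetRateLimiter:
    """Count client-initiated RST_STREAM frames on one connection and report when
    more than max_resets arrive within window_s seconds."""

    def __init__(self, max_resets: int = 100, window_s: float = 1.0):
        self.max_resets = max_resets
        self.window_s = window_s
        self._events = deque()  # timestamps of recent resets

    def on_reset(self, now: Optional[float] = None) -> bool:
        """Record one reset; True means the connection should be closed."""
        now = time.monotonic() if now is None else now
        self._events.append(now)
        # Drop resets that have slid out of the time window.
        while self._events and now - self._events[0] > self.window_s:
            self._events.popleft()
        return len(self._events) > self.max_resets


limiter = ResetRateLimiter(max_resets=100, window_s=1.0)
# Simulated attack: 150 resets, one per millisecond.
flags = [limiter.on_reset(now=i * 0.001) for i in range(150)]
print(flags.index(True))  # 100: the 101st reset inside the window trips the limit
```

A client that occasionally cancels calls (for example one reset every 100 ms) never trips the limit, while a burst of 150 resets in 150 ms does.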

9.3 Authentication Patterns

- gRPC

  - Built with “zero trust” in mind:

    - Call credentials – attach JWTs or other tokens in per‑call metadata

    - Channel credentials – TLS, optionally mTLS, for encryption and mutual authentication

  - In service meshes (Istio, Linkerd), mTLS is often enforced transparently by sidecars

- WebSocket

  - The protocol itself says little about authentication

  - Common anti‑patterns:

    - Putting tokens in query strings (leaked via logs or proxies)

    - Relying solely on cookies and not validating Origin

  - Safer patterns:

    - Use short‑lived tokens

    - Pass them in HTTP headers during the upgrade where the client can set them; the browser WebSocket API cannot set custom headers, so browser clients typically use the Sec-WebSocket-Protocol header or send the token in the first message, and the server validates it centrally
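A short‑lived token can be as simple as an HMAC‑signed user ID plus an expiry timestamp. The token format and secret below are hypothetical and exist only to show the expiry and signature checks; production systems typically use a standard format such as JWT through a vetted library. The server would run verify_token on whatever the client presents during or immediately after the upgrade.

```python
import base64
import hashlib
import hmac
import time
from typing import Optional

SECRET = b"server-side-secret"  # hypothetical; load from a secret store in practice


def _sign(payload: bytes) -> str:
    digest = hmac.new(SECRET, payload, hashlib.sha256).digest()
    return base64.urlsafe_b64encode(digest).decode("ascii").rstrip("=")


def issue_token(user_id: str, ttl_s: int = 60, now: Optional[float] = None) -> str:
    """Mint a hypothetical token of the form '<user_id>.<expiry>.<signature>'."""
    expiry = int((time.time() if now is None else now) + ttl_s)
    payload = f"{user_id}.{expiry}"
    return f"{payload}.{_sign(payload.encode('utf-8'))}"


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the user ID if the signature matches and the token has not expired."""
    try:
        user_id, expiry, signature = token.rsplit(".", 2)
        expiry_ts = int(expiry)
    except ValueError:
        return None  # malformed token
    expected = _sign(f"{user_id}.{expiry}".encode("utf-8"))
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return None
    if (time.time() if now is None else now) > expiry_ts:
        return None  # expired
    return user_id


token = issue_token("u-42", ttl_s=60, now=1_000_000)
print(verify_token(token, now=1_000_030))  # u-42
print(verify_token(token, now=1_000_061))  # None
```

A short expiry limits the damage if a token leaks into a log, which is the main risk the anti‑patterns above describe.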

10. Industry Case Studies

10.1 Uber: From REST to gRPC

Background

- Thousands of microservices communicating via JSON/HTTP

- Excessive CPU spent on serialization

- High latency and frequent type mismatches between services

Migration

- Broad adoption of gRPC + Protobuf across internal services

- Centralized IDL and schema management to enforce cross‑team contracts

- Edge gateways to accept mobile JSON requests and transcode them into gRPC

Results

- Significant reductions in bandwidth and latency

- At the cost of operating complex custom gateways and IDL repositories

10.2 Slack: A WebSocket‑Powered Real‑Time Platform

Slack’s core value proposition is real‑time collaboration.

- High‑level architecture:

  - Clients maintain a persistent WebSocket connection to a “gateway server”

  - When a user sends a message, it is first delivered via HTTPS to a web application for reliable ingestion

  - The web app writes the message to a message queue

  - Gateway servers subscribe to the queue and push messages over WebSocket to all active clients in the relevant channel

- Why not gRPC to the browser?

  - Slack must push data in real time to millions of enterprise users

  - It has to work through corporate firewalls and HTTP proxies

  - Browsers natively support WebSocket but have limited support for native gRPC

  - WebSocket uses an HTTP/HTTPS upgrade handshake, which is far more likely to pass through strict network boundaries

For the client‑connectivity side of Slack’s architecture, WebSocket is the most practical choice.

10.3 Netflix: Domain‑Driven gRPC in Practice

Netflix makes heavy use of gRPC for backend‑to‑backend communication and has extended it in domain‑specific ways.

- FieldMasks: avoiding over‑fetching

  In classic REST APIs, resources often contain large numbers of fields, and clients commonly fetch more data than they actually need.

  Netflix leverages Protobuf FieldMasks (google.protobuf.FieldMask) so that clients can specify exactly which fields they want in a gRPC response.

  This combines the efficiency of gRPC/Protobuf with GraphQL‑like flexibility in shaping responses.

- Aggregator services: multi‑source fan‑in

  Netflix also builds aggregator services and uses gRPC’s bidirectional streaming:

  - The UI sends a single streaming request to an aggregator

  - The aggregator concurrently calls recommendation, user, and video services via gRPC

  - It merges responses into one coherent data stream and pushes it back to the client

This pattern preserves strong typing and high performance while dramatically simplifying data‑fetching logic on the frontend.
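FieldMask semantics can be illustrated on plain dictionaries. This is not Netflix’s implementation: it is a hypothetical sketch that keeps only the dotted paths a client asked for and silently skips unknown paths. Protobuf libraries provide FieldMask utilities that work on real messages.

```python
import copy

_MISSING = object()


def _lookup(message: dict, parts: list):
    """Follow a dotted path through nested dicts; return _MISSING if absent."""
    node = message
    for part in parts:
        if not isinstance(node, dict) or part not in node:
            return _MISSING
        node = node[part]
    return node


def apply_field_mask(message: dict, paths: list) -> dict:
    """Return a new dict containing only the fields named by FieldMask-style
    dotted paths such as "metadata.rating"; unknown paths are skipped."""
    result: dict = {}
    for path in paths:
        parts = path.split(".")
        value = _lookup(message, parts)
        if value is _MISSING:
            continue
        target = result
        for part in parts[:-1]:
            target = target.setdefault(part, {})
        target[parts[-1]] = copy.deepcopy(value)  # never alias the source message
    return result


video = {  # hypothetical response object
    "id": "v1",
    "title": "Example Title",
    "synopsis": "A long synopsis",
    "metadata": {"rating": "PG", "runtime_min": 95, "cast": ["A", "B"]},
}
print(apply_field_mask(video, ["title", "metadata.rating"]))
# {'title': 'Example Title', 'metadata': {'rating': 'PG'}}
```

In a real service the mask would typically be applied before expensive fields are even fetched, which is where most of the savings come from.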

11. Conclusion and Strategic Decision Matrix

The protocol landscape is not a zero‑sum game. Modern architects should treat these protocols as specialized tools in the same toolbox, each suited for different kinds of problems.

11.1 Decision Framework

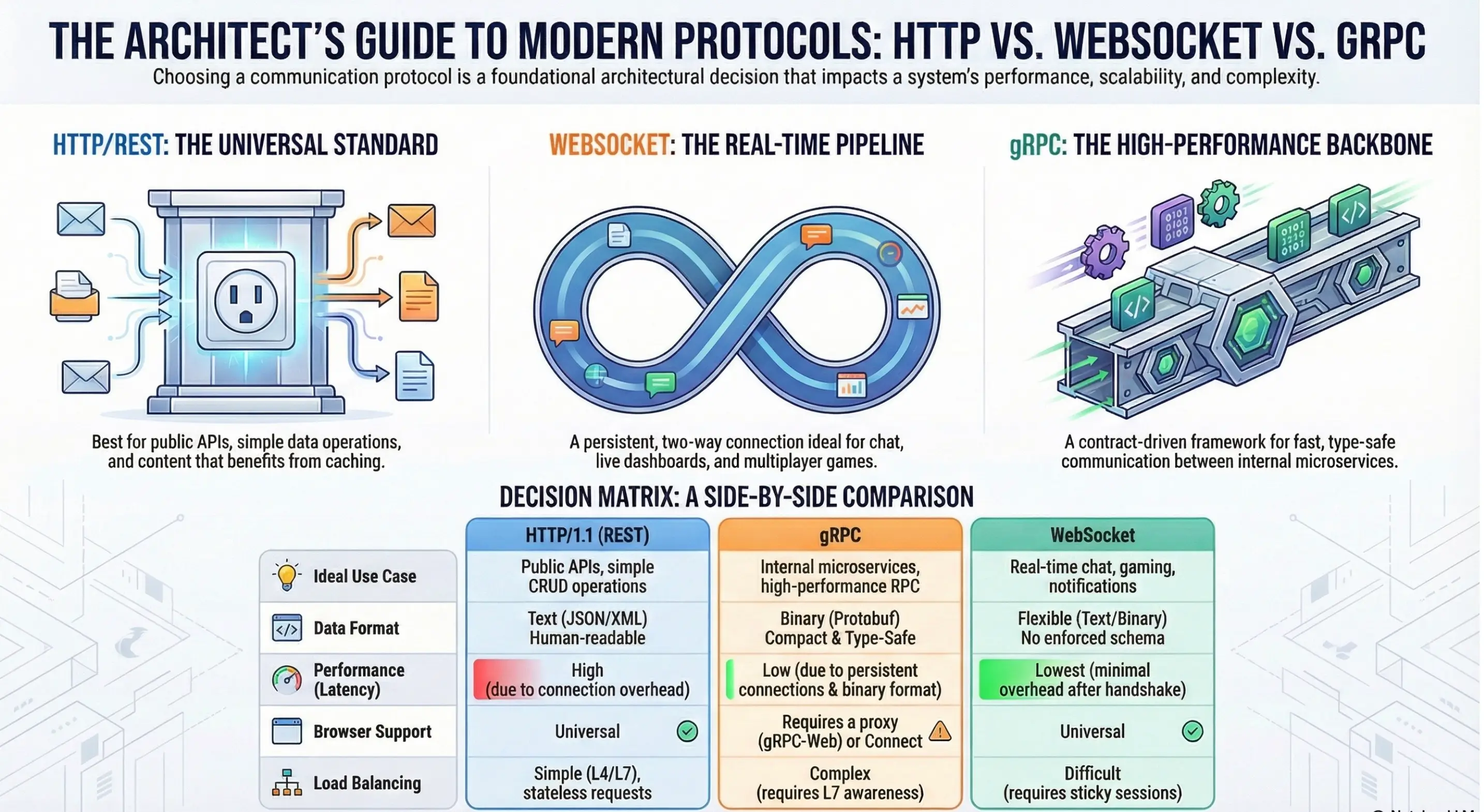

The table below summarizes HTTP/1.1 (REST), gRPC, and WebSocket across several important dimensions and can serve as an early‑stage design aid.

| Aspect | HTTP/1.1 (REST) | gRPC | WebSocket |
|---|---|---|---|
| Ideal use cases | Public APIs, simple CRUD, needs caching | Internal microservices, high performance, polyglot environments | Real‑time chat, games, live dashboards, notifications |
| Data format | Text (JSON/XML), verbose but easy to debug | Binary (Protobuf), compact and type‑safe | Flexible (text/binary), but no built‑in schema enforcement |
| Browser support | First‑class | Requires gRPC‑Web or Connect | First‑class |
| Caching | Native (ETag, Cache‑Control) | Harder; requires app‑level logic | Not applicable in practice |
| Load balancing | Simple L4/L7 is usually enough | Needs sophisticated L7 (per‑stream/per‑RPC) | Requires sticky sessions; hard to rebalance active connections |
| Typical latency | Highest (handshakes + text parsing + header bloat) | Low (multiplexing + binary) | Lowest (persistent pipe, tiny frame headers) |

From this matrix, it’s clear:

- No single protocol “wins” in all scenarios

- The key is to match each protocol to use case type, runtime environment, and team expertise

11.2 Looking Ahead

With HTTP/3 rollouts accelerating, a new wave of convergence is underway:

- gRPC over HTTP/3 (QUIC) promises to address mobile roaming issues where network changes would otherwise interrupt connections

- WebTransport aims to provide WebSocket‑like real‑time capabilities on top of QUIC, potentially unifying the transport layer for both RPC and event streams

A pragmatic set of guidelines for today’s architects:

- Internal service mesh: favor gRPC + Protobuf to maximize performance and type safety

- Public‑facing APIs: stick with REST/HTTP + JSON to maximize reach and ecosystem compatibility

- Real‑time features: reserve WebSocket (or, in the future, WebTransport) for the parts of the product where low‑latency, bidirectional interactions are central to the user experience, rather than sprinkling “real‑time” everywhere

In other words:

Use gRPC when you need efficient machine‑to‑machine communication, REST when you need broad accessibility, and WebSocket when you need real‑time, human‑facing interaction.
